Scaling Choice through Simulation Twinning

By

Ross Peat, Gousto VP of Data;

Fabrice Durier, Gousto Senior Principal Data Scientist;

David Buxton, Decision Lab CEO & Member of TechUK Digital Twins Council

The Mission: It Starts with the Customer

At Gousto, technology doesn’t exist for technology’s sake; it exists to answer a simple but ambitious mission: “To be the most loved way to eat dinner”. To achieve this, Gousto must offer its customers unparalleled choice. As part of this endeavour, it set a strategic goal to scale its weekly menu offering from 40 to 200 recipes.

Menu size scaling

However, in the world of logistics, more choice translates immediately into exponential complexity. Fulfilling 200,000 boxes per week means packing roughly one box every 3 seconds. And with each box containing around 50 ingredients, moving to 200 recipes created an explosion of inventory combinations.
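The packing rate quoted above can be checked with a few lines of arithmetic. This is a back-of-envelope sketch only: it assumes the site packs continuously, 24 hours a day and 7 days a week, which is an assumption rather than a stated fact, and the function names are illustrative.

```python
# Back-of-envelope check of the packing rate.
# Assumption (not from the article): packing runs continuously, 24/7.
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def seconds_per_box(boxes_per_week: int,
                    operating_seconds: int = SECONDS_PER_WEEK) -> float:
    """Average time available to pack one box."""
    return operating_seconds / boxes_per_week


def weekly_picks(boxes_per_week: int, items_per_box: int) -> int:
    """Rough number of individual picks per week."""
    return boxes_per_week * items_per_box


if __name__ == "__main__":
    print(round(seconds_per_box(200_000), 2))  # 3.02
    print(weekly_picks(200_000, 50))           # 10000000
```

Any shorter operating window tightens the figure further. Under a hypothetical 16-hour day, for instance, the same volume leaves about 2 seconds per box.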

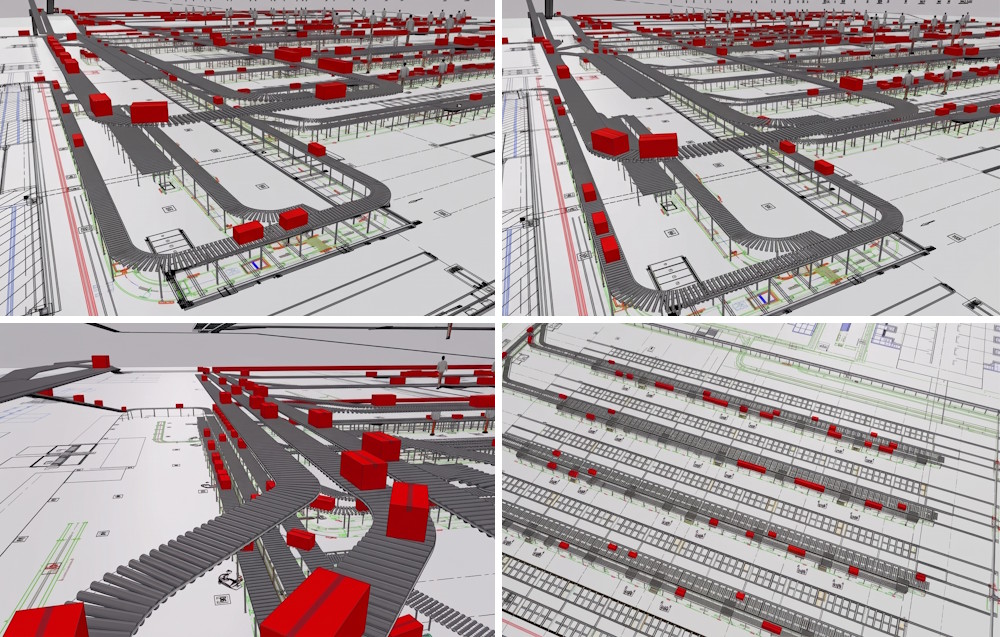

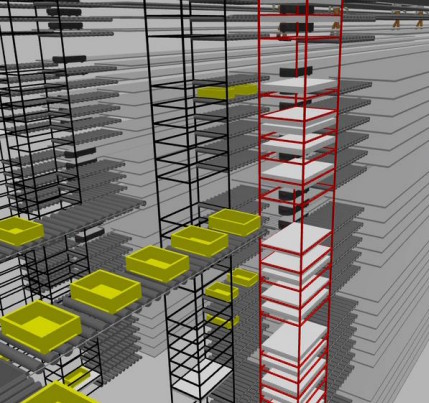

The challenge wasn’t just culinary; it was a high-stakes engineering puzzle. While Gousto already possessed a simulation model of the picking process for the customer-bound Red Boxes, this was only part of the picture. Every simulation run of the model made simplistic assumptions about stock supply. To truly understand the cascading impact of small inefficiencies, Gousto needed to model warehouse stock replenishment. This led them to create a simulation of Blue Totes travelling via spine conveyors, lifts, and shuttles.

The Approach: Simulation Twinning

The answer lay in Simulation Twinning. Over an ambitious five-month sprint encompassing 24 epics and over 300 tickets, Gousto and Decision Lab partnered to develop ToteSim, a high-fidelity, data-driven model of the Warrington facility’s blue tote replenishment system. Doing so laid the foundations for a digital twin while delivering the benefits of replay and prediction sooner.

Rather than building an isolated tool, the team designed a modular architecture that merged the existing Red Box simulation with the new Blue Tote simulation. Internally dubbed the Red + Blue = Purple interface, this design allows the two simulations to operate independently or in tandem. Operations teams can now run flexible scenarios, such as passing historic picking data into the Red simulation while running infinite stock scenarios in the Blue simulation, to isolate bottlenecks free of stock constraints.
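One way to picture this kind of modular coupling is a small interface that the picking side depends on, with interchangeable stock-supply implementations behind it. The sketch below is purely illustrative. The class names (`StockSource`, `InfiniteStock`, `FiniteStock`, `RedBoxSim`) and the data shapes are hypothetical and do not describe ToteSim's actual design.

```python
from typing import Dict, List, Protocol, Tuple


class StockSource(Protocol):
    """Hypothetical contract between the picking and replenishment sides."""

    def take(self, sku: str, qty: int) -> int:
        """Return how many units could actually be supplied."""
        ...


class InfiniteStock:
    """Stand-in for an 'infinite stock' scenario: every request is met."""

    def take(self, sku: str, qty: int) -> int:
        return qty


class FiniteStock:
    """Stand-in for a constrained supply with fixed starting levels."""

    def __init__(self, levels: Dict[str, int]) -> None:
        self.levels = dict(levels)

    def take(self, sku: str, qty: int) -> int:
        available = self.levels.get(sku, 0)
        granted = min(available, qty)
        self.levels[sku] = available - granted
        return granted


class RedBoxSim:
    """Toy picking simulation that replays a list of (sku, qty) picks."""

    def __init__(self, stock: StockSource) -> None:
        self.stock = stock

    def replay(self, picks: List[Tuple[str, int]]) -> Dict[str, int]:
        requested = fulfilled = 0
        for sku, qty in picks:
            requested += qty
            fulfilled += self.stock.take(sku, qty)
        return {"requested": requested, "fulfilled": fulfilled,
                "short": requested - fulfilled}


if __name__ == "__main__":
    historic_picks = [("onion", 3), ("rice", 2), ("onion", 2)]
    print(RedBoxSim(InfiniteStock()).replay(historic_picks))
    print(RedBoxSim(FiniteStock({"onion": 4})).replay(historic_picks))
```

Replaying the same picks against both sources separates shortfalls caused by stock supply from those caused by the picking process itself.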

A Living System: Data Science Integration and Stress Testing

To keep the simulation as true to reality as possible, ToteSim is deeply integrated with Gousto’s proprietary data science products. Every five minutes of simulated time, it calls a replenishment service wrapper to run the replenishment and pick face algorithms, recalculating stock to decant and updating tray quantities exactly as the real warehouse software would.
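The periodic call described above maps naturally onto a recurring event in a discrete-event clock. The following is a minimal sketch under stated assumptions: `run_clock` and `stub_replenishment_service` are hypothetical names, and the stub's top-up rule is an invented placeholder, not Gousto's replenishment or pick face algorithm.

```python
import heapq
from typing import Callable, Dict, List, Tuple

REPLEN_INTERVAL_S = 5 * 60  # five minutes of simulated time


def stub_replenishment_service(tray_levels: Dict[str, int],
                               target: int) -> Dict[str, int]:
    """Hypothetical stand-in: units to decant per SKU to reach `target`."""
    return {sku: target - qty
            for sku, qty in tray_levels.items() if qty < target}


def run_clock(duration_s: int, on_replenish: Callable[[int], None]) -> int:
    """Advance simulated time, firing `on_replenish(now)` at each interval.

    Returns the number of replenishment calls made.
    """
    events: List[Tuple[int, str]] = [(REPLEN_INTERVAL_S, "replenish")]
    calls = 0
    while events and events[0][0] <= duration_s:
        now, kind = heapq.heappop(events)
        if kind == "replenish":
            on_replenish(now)
            calls += 1
            # Re-schedule the next call one interval later.
            heapq.heappush(events, (now + REPLEN_INTERVAL_S, "replenish"))
    return calls


if __name__ == "__main__":
    trays = {"onion": 2, "rice": 10}

    def on_replenish(now: int) -> None:
        for sku, qty in stub_replenishment_service(trays, target=8).items():
            trays[sku] += qty

    print(run_clock(60 * 60, on_replenish))  # 12 calls in one simulated hour
    print(trays)
```

Because the interval is measured in simulated time rather than wall-clock time, an hour of warehouse activity costs only as much real time as the callbacks take to run.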

This high-fidelity environment allows Gousto to stress-test their physical and digital infrastructure in unprecedented ways. To simulate machine failures, for example, engineers can independently configure each of the 20 lifts and 270 shuttles to fail based on historic, synthetic, or stochastic data, then measure how the warehouse recovers from catastrophic breakdowns.
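As an illustration of the stochastic case, outage windows for each machine can be drawn from a seeded random generator. This is a sketch only. The exponential failure and repair model, the `Outage` record, and the mean-time parameters are assumptions chosen for simplicity, not ToteSim's actual failure model. Historic or synthetic data would simply supply the list of outages directly.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Outage:
    machine_id: str
    start_s: float
    end_s: float


def fleet_ids(n_lifts: int = 20, n_shuttles: int = 270) -> List[str]:
    """Machine identifiers for the fleet sizes quoted in the article."""
    return ([f"lift-{i}" for i in range(n_lifts)]
            + [f"shuttle-{i}" for i in range(n_shuttles)])


def stochastic_outages(machine_id: str, horizon_s: float, mtbf_s: float,
                       mttr_s: float, rng: random.Random) -> List[Outage]:
    """Draw non-overlapping outages with exponential up and down times.

    mtbf_s: assumed mean time between failures (seconds).
    mttr_s: assumed mean time to repair (seconds).
    """
    outages: List[Outage] = []
    t = rng.expovariate(1.0 / mtbf_s)  # time of first failure
    while t < horizon_s:
        end = min(t + rng.expovariate(1.0 / mttr_s), horizon_s)
        outages.append(Outage(machine_id, t, end))
        t = end + rng.expovariate(1.0 / mtbf_s)  # next failure after repair
    return outages


def availability(outages: List[Outage], horizon_s: float) -> float:
    """Fraction of the horizon during which the machine was up."""
    down = sum(o.end_s - o.start_s for o in outages)
    return 1.0 - down / horizon_s


if __name__ == "__main__":
    rng = random.Random(7)  # fixed seed keeps scenarios reproducible
    shift_s = 8 * 3600
    for mid in fleet_ids()[:3]:
        plan = stochastic_outages(mid, shift_s, mtbf_s=4 * 3600,
                                  mttr_s=600, rng=rng)
        print(mid, len(plan), round(availability(plan, shift_s), 3))
```

Giving each machine its own parameters, or its own pre-recorded outage list, keeps the configuration independent per lift and shuttle.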

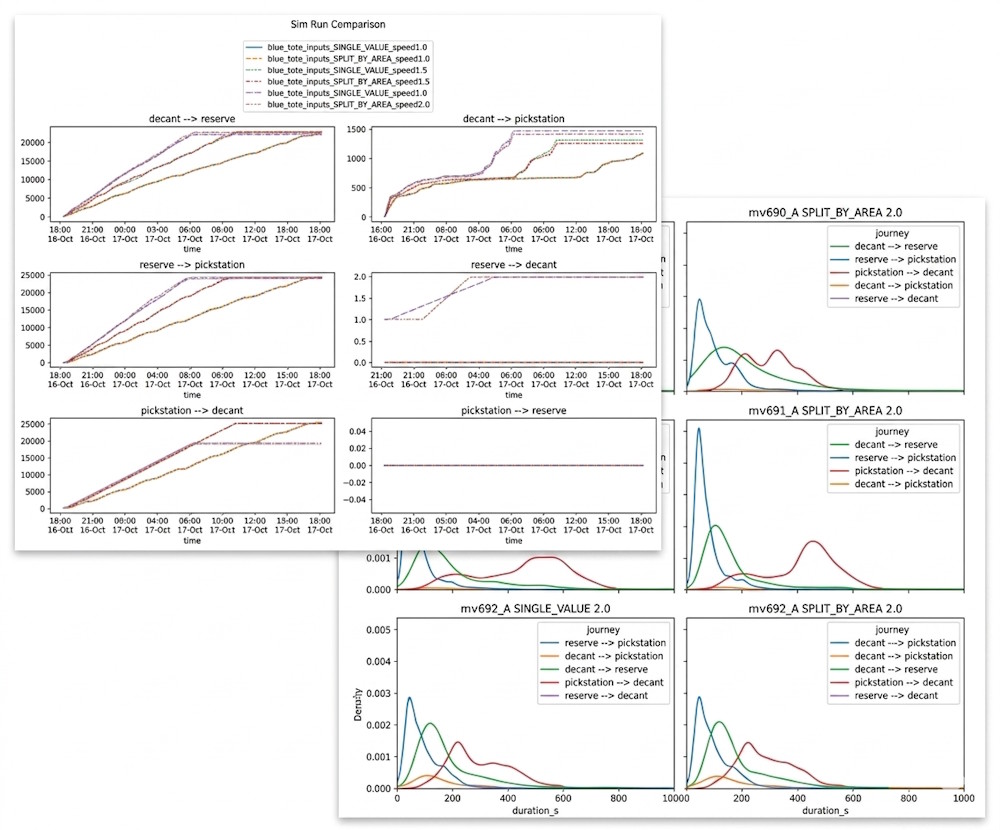

The Application: De-risking Innovation and Accelerating ROI

With the ability to run hundreds or even thousands of simulations per hour, ToteSim has successfully simulated hundreds of millions of boxes and tens of billions of picks. But a model is only as good as the trust placed in it. By feeding the model historical production data to replay past shifts, the simulation regularly achieves accuracy in the 80-90% range, and highly critical operational pathways exceed 95%.
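The article does not define how replay accuracy is calculated. One common convention, shown here purely as an assumption, scores each metric as one minus the relative error between the simulated and recorded values, then averages across metrics. The function names and sample figures below are invented for illustration.

```python
from typing import Dict, Tuple


def replay_accuracy(simulated: float, actual: float) -> float:
    """Accuracy as 1 - relative error, floored at 0 (assumed definition)."""
    if actual == 0:
        raise ValueError("actual value must be non-zero")
    return max(0.0, 1.0 - abs(simulated - actual) / abs(actual))


def shift_accuracy(metrics: Dict[str, Tuple[float, float]]) -> float:
    """Mean accuracy over {metric_name: (simulated, actual)} pairs."""
    scores = [replay_accuracy(sim, act) for sim, act in metrics.values()]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Invented sample figures, not Gousto data.
    sample = {
        "totes_delivered": (9_200, 10_000),
        "picks_completed": (48_500, 50_000),
    }
    for name, (sim, act) in sample.items():
        print(name, round(replay_accuracy(sim, act), 3))
    print("shift", round(shift_accuracy(sample), 3))
```

Whatever definition is used, scoring pathways separately makes it possible to hold the most critical ones to a stricter threshold than the overall average.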

The simulation quickly became the testbed for critical infrastructure decisions. For example, when exploring how to optimise spine conveyor throughput, the team hypothesised that increasing tote counter limit variables would boost performance. The simulation rapidly revealed that the proposed variant performed almost identically to the baseline. By identifying this digitally, Gousto avoided unnecessary and disruptive physical reconfiguration.

Furthermore, pairing this simulation with Gousto’s advanced Gurobi optimisation models allows the data science team to safely test iterative improvements, with real-life results matching simulated expectations.

The Future: A Journey to Real-Time

For Gousto, this simulation twin is not the destination, but a foundational step in a longer journey. Building upon the lessons from this initial project, the team quickly initiated a second iteration. In just three months, an end-to-end model of Gousto’s second fulfilment site was successfully built and delivered. This rapid deployment has drastically broadened the range of operational scenarios that can now be tested at scale and at pace.

The current success proves the value of simulation within the context of Digital Twins. As the network scales, the ultimate ‘North Star’ ambition is to seamlessly merge live operations and simulations, evolving this capability into a real-time, IoT-based Digital Twin that offers live visibility and automated factory control.

The lesson for the wider industry is clear: you don’t need to start with a perfect, real-time asset to generate massive operational ROI. By focusing on simulation twinning (the active process of simulation, validation, and experimentation), Gousto turned a complex scaling challenge into a competitive advantage, ensuring their technology constantly serves an exceptional customer experience.